I Needed a 'Comms With AI' Resource That Didn't Exist. So I Built One Using Claude Code

I needed a Comms with AI template library that didn't exist, so I built one. Here's the workflow – and where each AI tool hit its limits.

How CommsWith.AI went from first functional prototype to public launch – and what the process taught me about building with AI at pace

As someone who has spent hours, days, weeks and months of their career on the most mundane of technical tasks – hello, website migrations! – I find there's a moment in most AI-assisted builds nowadays where you stop and think: that should not have been that easy.

For me, it came less than 10 hours in, looking at a fully navigable, mobile-responsive, SEO-structured website that had not existed just a few days ago. Templates rendering correctly. Category pages linking through. A working newsletter signup. The skeleton of something both substantial and useful – produced, largely, through a conversation.

That was early February. CommsWith.AI is now live and available to use. But the ten-to-twelve-hour sprint that produced the first version is not really the story. The story is what happened in the two months after.

Why CommsWith.AI exists

Before the build, there was a gap. Communications professionals had plenty of places to read about AI. Applied Comms AI is one of them. What they had fewer of were practical artefacts they could take directly into their work: templates with real AI prompts built in, review checklists that addressed governance rather than just grammar, toolkits that bundled the right resources for a complete workflow.

The SERP (Search Engine Results Page!) confirmed the gap. Smartsheet has templates but no comms nuance. HubSpot gates content behind forms. Agency sites push templates as lead generation rather than as a genuine resource. Nobody had built the integrated operating system for communications professionals who want to use AI properly.

That became the brief for CommsWith.AI, which I worked out in conversation with Claude Chat: a template-first resource hub with workflow categories, bundled toolkits, and every template built to a consistent standard – what it is, when to use it, the template itself, a tested AI prompt, a human review checklist, and a real example output.

Not generated and published. Built to a spec, reviewed by me, and published to a rigorous standard.

The strategic position in the Faur ecosystem was also deliberate:

- Applied Comms AI = Learn. Experiments, frameworks, what actually works.

- CommsWith.AI = Do. Templates, prompts, workflows, ready to use today.

- Faur = Implement. When organisations need this at scale, with expert support.

Every piece of content on Applied Comms AI is meant to point to relevant and useful practical tools and resources. Now it can.

Phase one: getting to functional

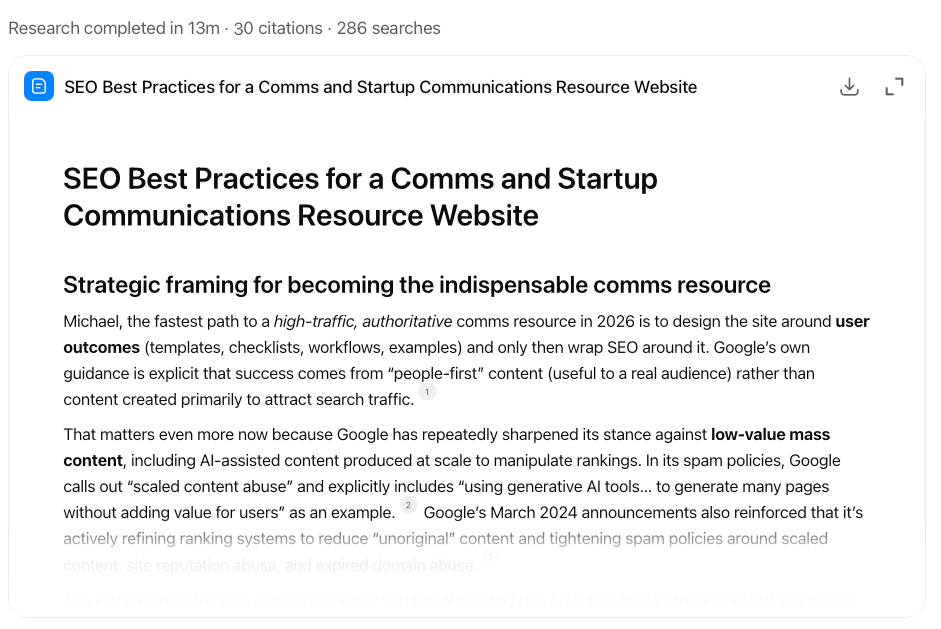

The research phase came first. I used ChatGPT's Deep Research to produce a competitive analysis of the comms template landscape – keyword opportunities, intent classification, and content gap mapping, as well as a technical SEO specification that shaped the information architecture before a line of code was written.

This turned out to matter more than I expected. The research didn't just tell me what to build. Examining the results in Claude Code, and working out how to implement them, also showed me how to build the site so that it would compound rather than fragment. A pillar-cluster architecture, with templates organised into category pages that build authority over time rather than competing with each other. An internal linking structure designed as a product feature, not an afterthought. The difference between building a site and building something that earns trust in search.
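One way to picture the pillar-cluster idea: each template belongs to one category page (the pillar), and its related links are drawn from siblings in the same category, so internal links reinforce a cluster rather than scattering across the site. What follows is a minimal sketch under stated assumptions, not the site's actual code. The slugs, category names, and the `categoryPageLinks` and `relatedTemplates` helpers are all hypothetical.

```typescript
interface TemplateEntry {
  slug: string; // hypothetical URL slug
  category: string; // the pillar (category page) this template belongs to
}

// Hypothetical sample data, for illustration only.
const templates: TemplateEntry[] = [
  { slug: "press-release", category: "content-production" },
  { slug: "blog-brief", category: "content-production" },
  { slug: "social-post", category: "content-production" },
  { slug: "approval-matrix", category: "governance-approvals" },
];

// Links a category (pillar) page would carry: every template in its cluster.
function categoryPageLinks(category: string, entries: TemplateEntry[]): string[] {
  return entries.filter((e) => e.category === category).map((e) => e.slug);
}

// Related links for one template: siblings in the same cluster, capped at `limit`.
function relatedTemplates(slug: string, entries: TemplateEntry[], limit = 3): string[] {
  const self = entries.filter((e) => e.slug === slug)[0];
  if (!self) return []; // unknown slug: no related links
  return entries
    .filter((e) => e.category === self.category && e.slug !== slug)
    .slice(0, limit)
    .map((e) => e.slug);
}

console.log(categoryPageLinks("content-production", templates));
console.log(relatedTemplates("press-release", templates));
```

With this sample data, the related links for `press-release` stay inside the content-production cluster and never point at `approval-matrix`, which is the "compound rather than fragment" behaviour in miniature.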

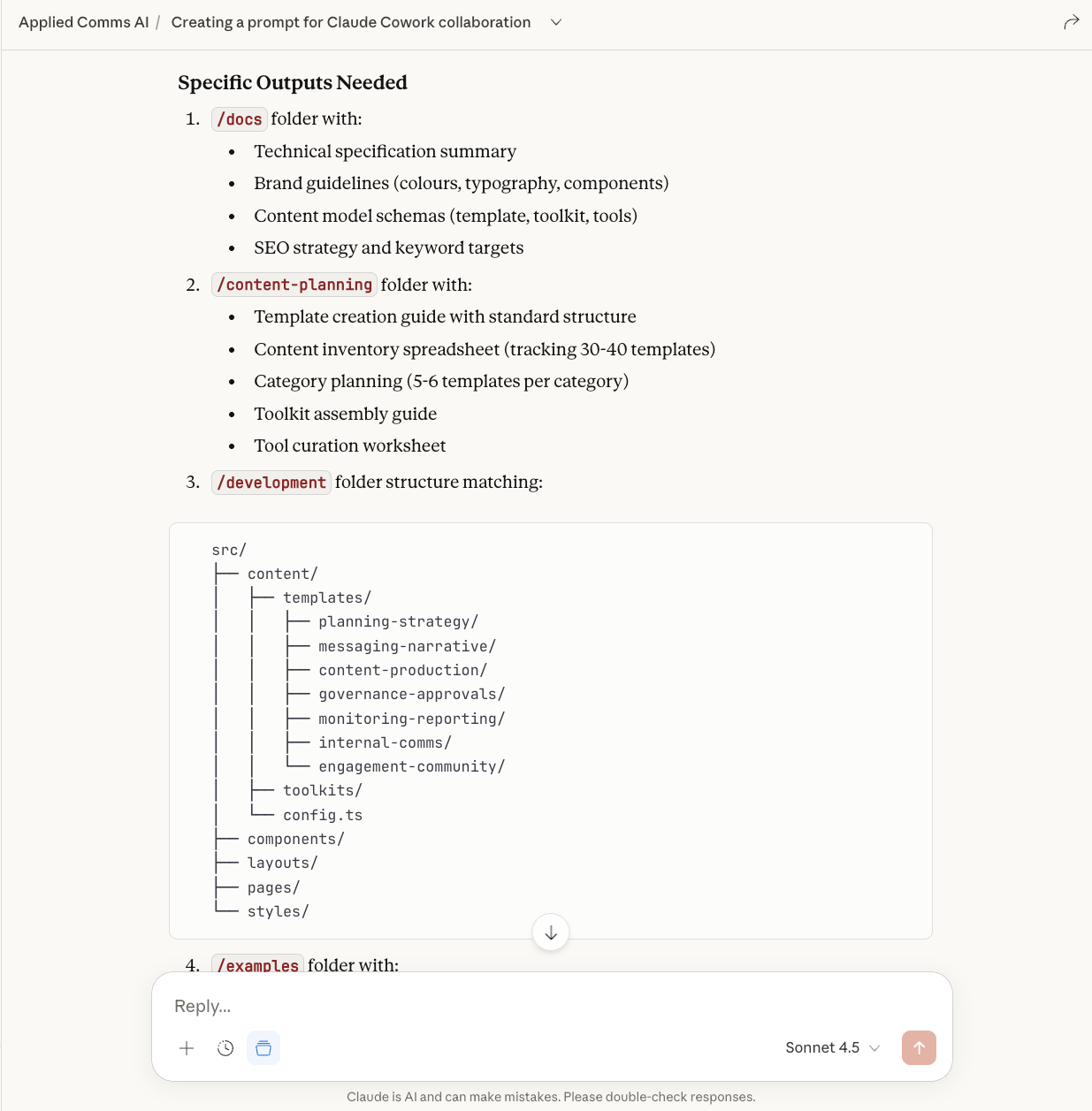

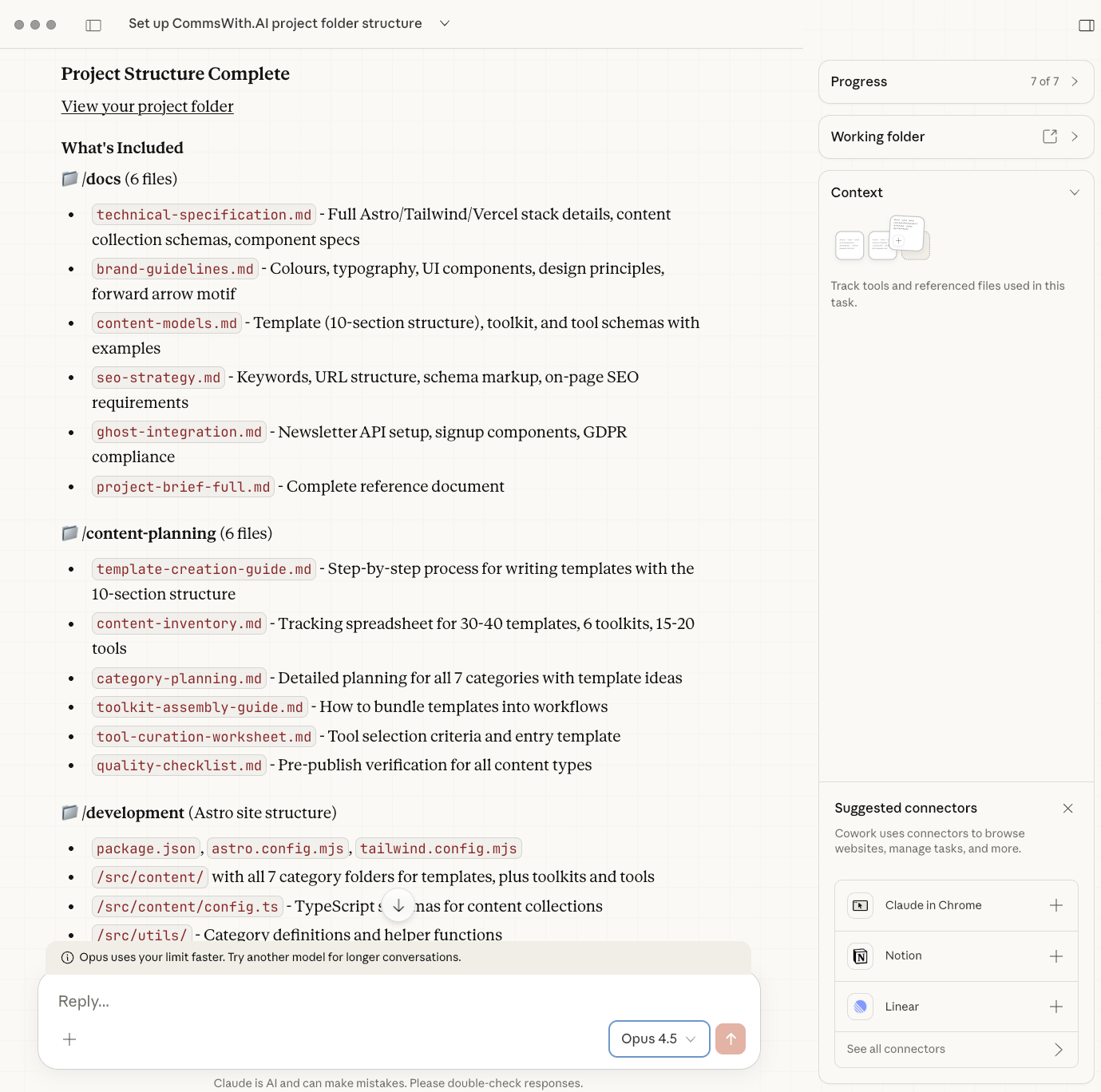

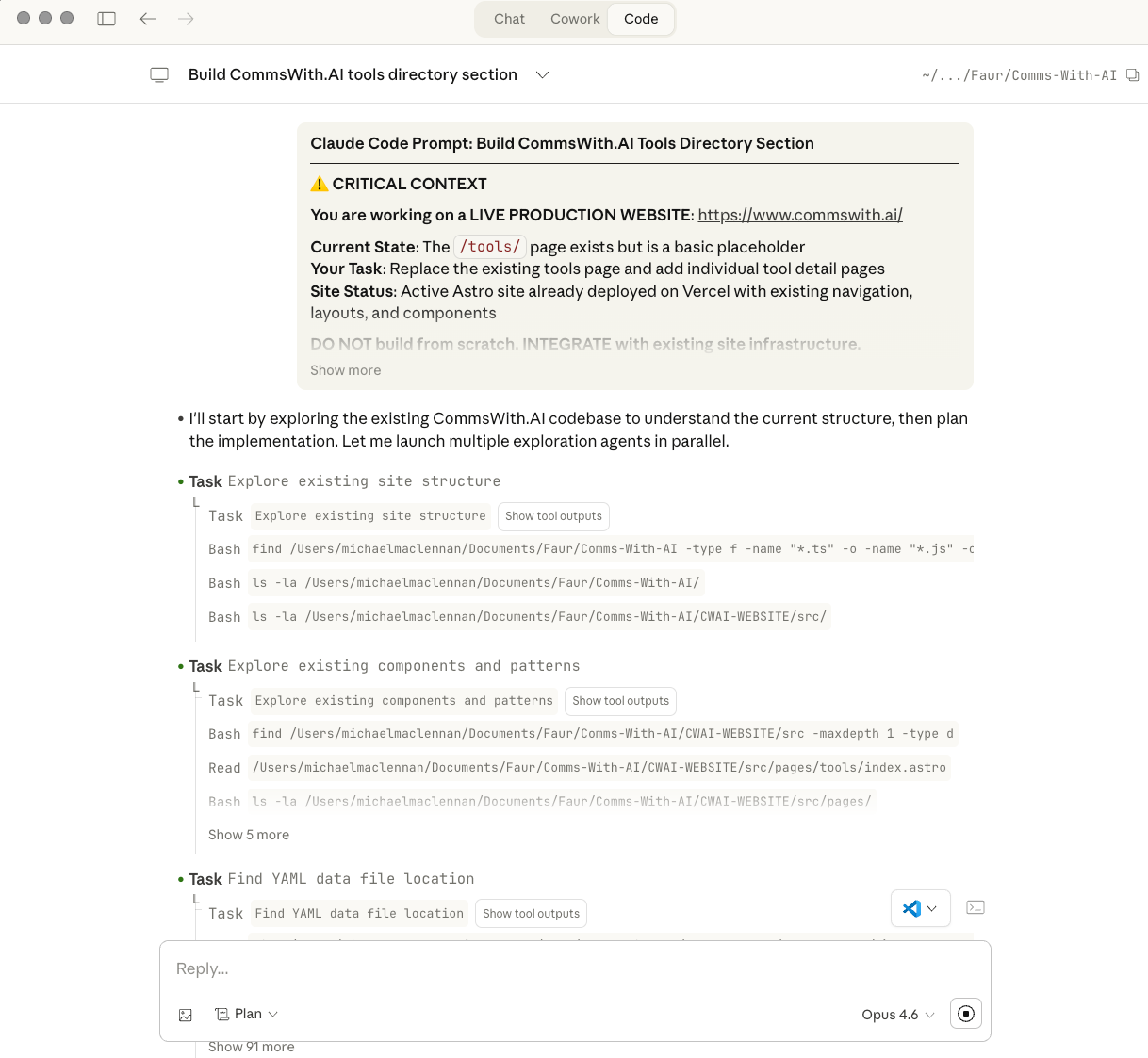

I developed a detailed strategic specification, and with that in hand I switched tools. Claude Code handled the implementation: the Astro framework and Tailwind CSS architecture, the content collection schemas that enforce quality standards across every template, the component library (TemplateCard, PromptBlock, CopyBlock, Checklist, RelatedTemplates), the automatic XML sitemap generation, structured data markup, and mobile-first responsive layout.

If you're lost on what any of that means – don't worry, so was I, and for the most part I still am. Part of the sea change people have experienced using Claude Code is the ability to implement technical and highly effective solutions that they don't need to understand fully. (This of course does come with some risks, which I go into later.)

The reason for using three tools rather than one wasn't ideology – it was observation. ChatGPT, at the research and synthesis stage, was excellent at comprehensive analysis: building a picture from multiple sources. Claude Chat was strong at identifying patterns and at acting as a collaborative partner in outlining and defining a structured strategic document. Claude Code, at the implementation stage, was more methodical. It showed its working. It caught issues before they became problems rather than after. It was better for iterative refinement, where the task was to take a clear brief and execute it with increasing precision.

The tools have different modes of thinking. Using them for the tasks they're suited to works significantly better than trying to make one tool do everything. That's not a permanent statement about either platform – models change – but it was true throughout this build.

Ten to twelve hours of work produced a basic but fully functional site. Every core page rendered. Every template category navigated. The newsletter signup connected. The infrastructure was sound.

And then the real work started.

Phase two: making it worth using

A functional site and a useful site are not the same thing.

The weeks after the initial build were a series of structured improvement loops, each one adding a layer of rigour that the first version lacked.

UX testing via voice notes. I recorded myself walking through the site, narrating what I was noticing, what felt unclear, what a first-time visitor would encounter. The recordings went into Claude Chat, which translated them into a prioritised list of actionable improvements for Claude Code to implement. This sounds simple. It was surprisingly effective – the discipline of speaking your way through a site forces a different kind of attention than reading through it.

ICP (ideal customer profile) stress-testing. I wrote a full article about this process on Applied Comms AI – the ICP agent methodology is documented there – but the short version is that I built 'Sarah Clarkycat', a Custom GPT persona representing a Head of Communications at a 200-person B2B SaaS company. She reviewed every main page and every template category. Her feedback was specific and often uncomfortable: the homepage made claims without anchoring them in proof; the governance language was too thin for an audience that would need to justify every tool to Legal and InfoSec; the free/paid question was left unanswered when it was one of the first things a visitor would want to know.

The changes that followed weren't cosmetic. Descriptions were rewritten from feature language to outcome language. Review checklists became substantive rather than generic. The homepage added a template preview rather than just promising one. Governance considerations, which Sarah raised unprompted across multiple tests, were woven through the site in ways the initial build had not anticipated.

What this process confirmed: for communications professionals, governance is not a footnote. It's part of the value proposition. Any resource that doesn't address risk isn't addressing the actual job.

The Sprint Implementation Brief. By late February, the accumulated improvements from UX testing and ICP feedback had grown into a structured brief – produced in Claude Chat, implemented in Claude Code. The sprint covered navigation redesign, a workflow finder component, a stat strip, founder credibility signals, toolkit page improvements, and a series of smaller fixes that each individually looked minor and collectively mattered considerably.

What the sprint brief represented was a shift in working method. Instead of my translating individual observations directly into code, Claude Cowork (which had launched by this stage) became the strategic layer between observation and execution – synthesising feedback, setting priorities, and producing a brief that Claude Code could work from systematically.

Technical rigour via Claude plugins. The Superpowers and Code Review plugins added a layer of technical scrutiny that caught issues the conversational build process had not prioritised: performance optimisation, accessibility checks, code quality standards. Alongside this, a social media thread about website optimisation went into Claude Chat for review against the site's current state, producing another round of targeted suggestions.

Design refinement. The Faur website was used as a design reference for closer visual alignment. Claude Code produced updated homepage images to replace placeholder visuals.

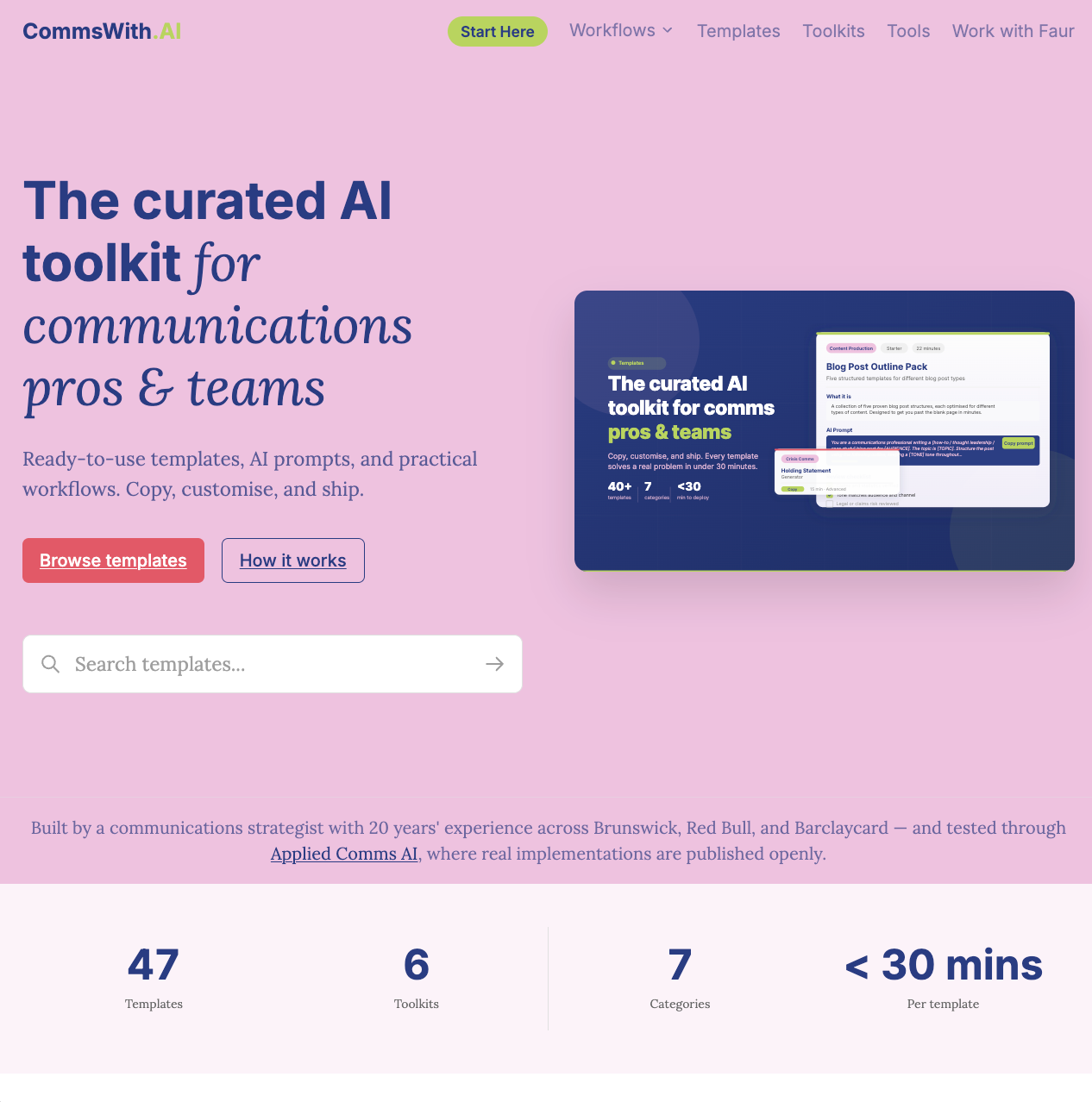

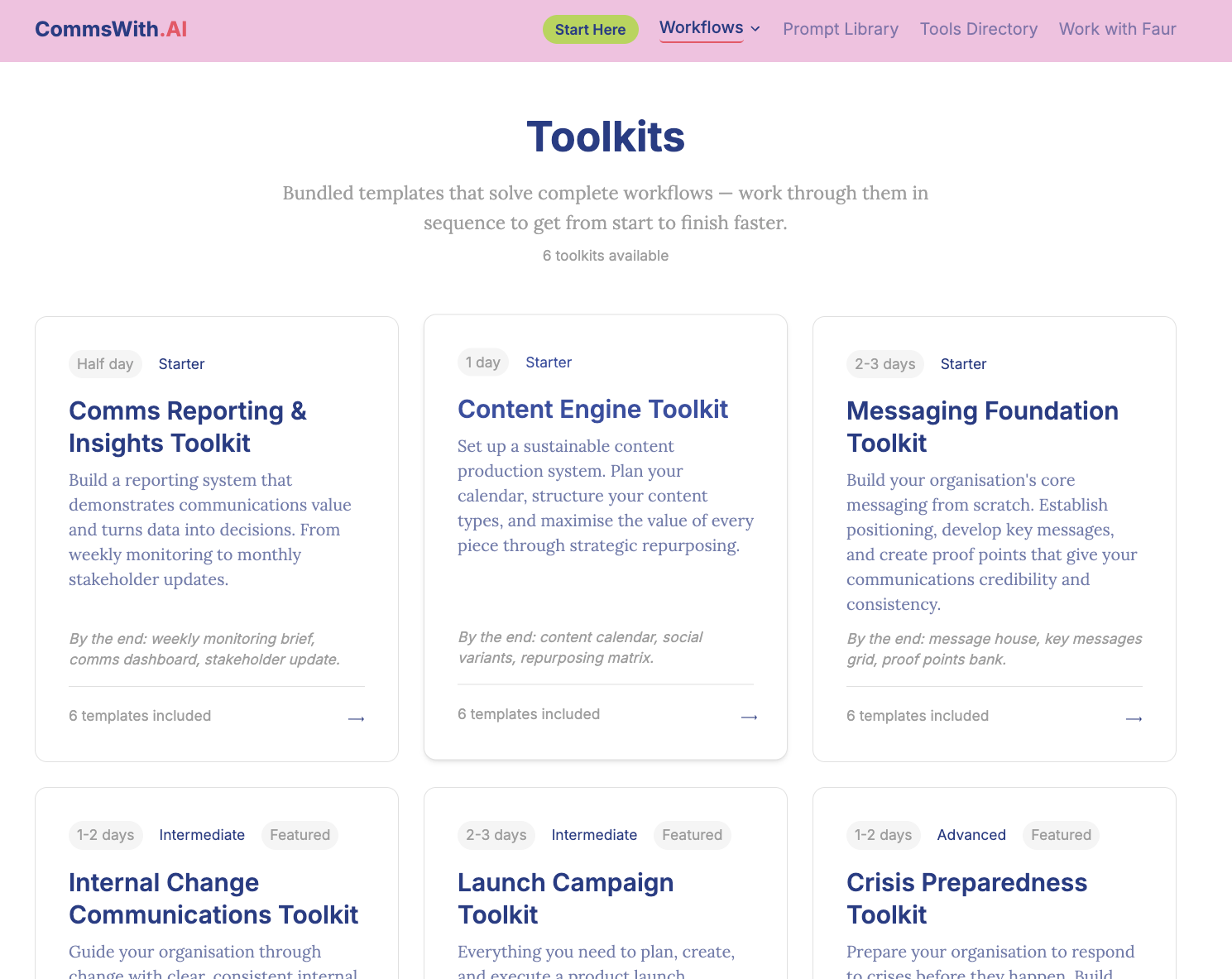

The result of all this iteration is a site that, as of this article, carries 47 templates across seven workflow categories and six bundled toolkits.

What the "no failures" finding actually means

One of the questions I ask myself when documenting any build is: what went wrong? It's the most useful part of the story, and it's what makes Applied Comms AI worth reading rather than just another AI success narrative.

The honest answer here is that nothing went wrong in the way things went wrong eighteen months ago with 'vibe coding' tools. There were no broken builds, no hours lost to debugging a problem the tool had introduced, no moments where the approach had to be abandoned and restarted.

That is itself significant. The floor for AI-assisted development has risen substantially. Anyone with clear requirements and a systematic approach can build a functional, well-structured resource site. The tools are capable enough that basic execution is no longer the constraint.

Which raises the question I've been sitting with since the site went live: if anyone can build this, what is the actual moat?

My answer, for now, is the content – but more precisely, the professional judgement embedded in the content. Every template on CommsWith.AI reflects years of communications work. The review checklists are not generic. The AI prompts have been tested against real briefs. The governance language exists because I've had to justify claims to legal teams and manage spokespeople who wanted to say things that couldn't be substantiated. The information architecture reflects how communications workflows actually connect, not how someone without comms experience would guess they do.

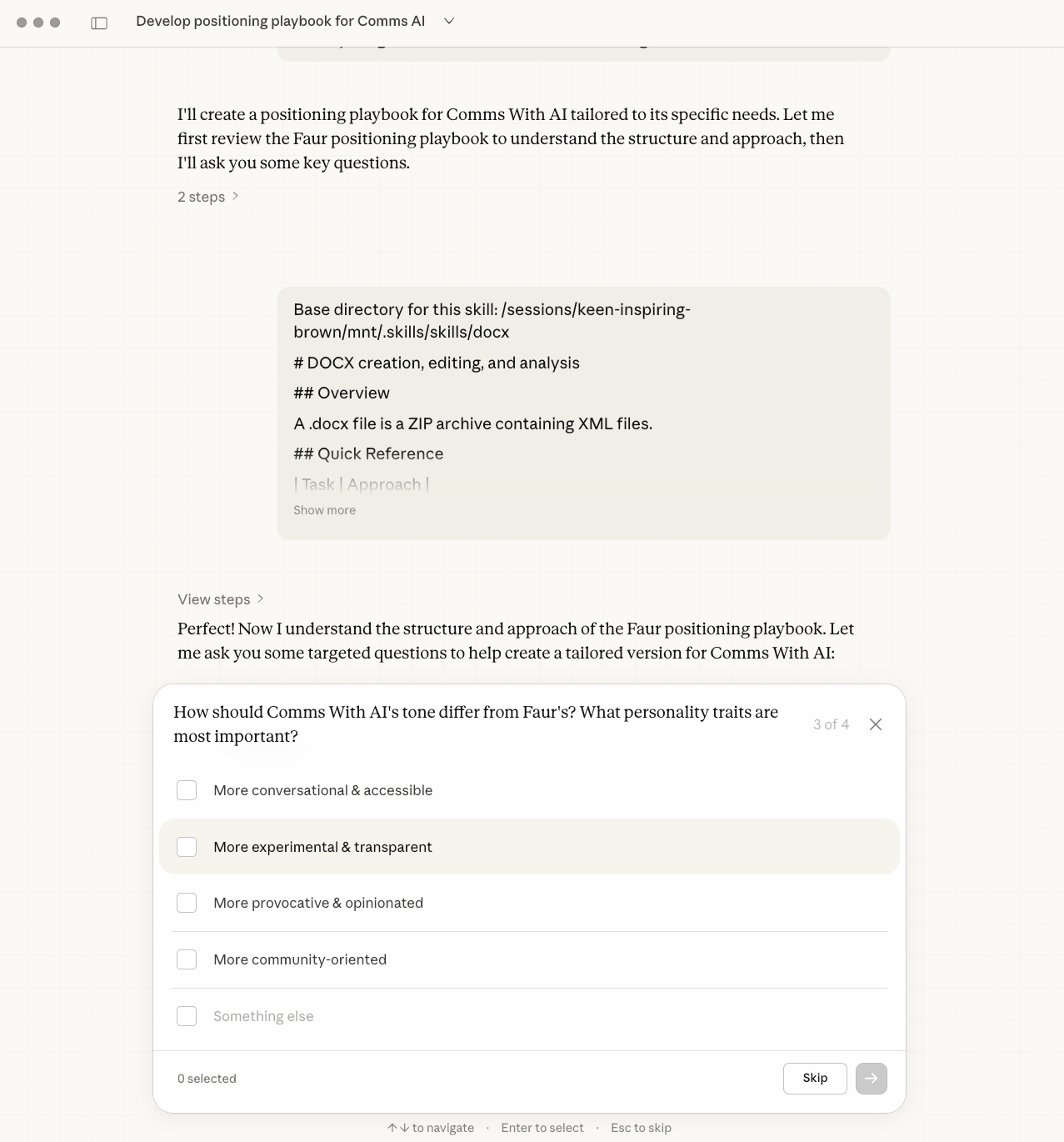

As part of this, I worked further on the underlying structure for CommsWith.AI, as well as on the strategies for Faur and Applied Comms AI, developing detailed playbooks to govern how we would move forward and develop this nascent ecosystem.

A non-communications person could build a site with the same structure. They could not build the same content. That distinction matters more as the build tools become more capable – the quality of professional judgement embedded in what gets built becomes the differentiator, not the technical execution.

How much that matters in practice, I won't know until the site has been in active use for longer. That uncertainty is part of the experiment, and I'll return to it in a future piece on Applied Comms AI as usage data starts to accumulate.

What CommsWith.AI is now

The site currently carries 47 templates across seven categories – Planning & Strategy, Messaging & Narrative, Content Production, Governance & Approvals, Monitoring & Reporting, Internal Comms, and Engagement & Community – and six bundled toolkits: Launch a Campaign, Executive Comms Pack, Risk & Governance Starter, Internal Update, Monitoring to Action, and Small Business Comms Hygiene.

Every template follows the same structure: what it is, when to use it, the inputs needed, the template itself in copyable blocks, a tested AI prompt with variations, a human review checklist, and an example output. The consistency is deliberate – it means every template delivers the same level of utility, and the content collection schema enforces it technically so that a template can't be published without the required sections.
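The enforcement described above can be sketched in a few lines. In an Astro project this rule would normally live in the content collection's schema. The version below uses plain TypeScript so that it runs on its own, and it swaps in a simple presence check rather than reproducing the site's real schema. The field names and the `missingSections` and `canPublish` helpers are hypothetical, chosen only to mirror the seven sections listed above.

```typescript
// Hypothetical section names mirroring the seven-part template structure.
const REQUIRED_SECTIONS = [
  "whatItIs",
  "whenToUse",
  "inputs",
  "template",
  "aiPrompt",
  "reviewChecklist",
  "exampleOutput",
] as const;

type SectionName = (typeof REQUIRED_SECTIONS)[number];

// A draft may have any subset of the sections filled in.
type TemplateDraft = Partial<Record<SectionName, string>>;

// Return the required sections that are absent or blank, in declaration order.
function missingSections(draft: TemplateDraft): SectionName[] {
  return REQUIRED_SECTIONS.filter((name) => {
    const value = draft[name];
    return value === undefined || value.trim() === "";
  });
}

// A draft is publishable only when no required section is missing.
function canPublish(draft: TemplateDraft): boolean {
  return missingSections(draft).length === 0;
}

// Hypothetical draft with only three of the seven sections written.
const incompleteDraft: TemplateDraft = {
  whatItIs: "A one-page press release skeleton.",
  whenToUse: "Announcing a product launch.",
  template: "[Headline]\n[Lead paragraph]",
};

console.log(missingSections(incompleteDraft));
console.log(canPublish(incompleteDraft));
```

Run against the sample draft, the check reports `inputs`, `aiPrompt`, `reviewChecklist` and `exampleOutput` as missing and refuses publication. Failing loudly at build time, rather than relying on an editor to spot the gap, is the point of putting the standard in the schema.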

The toolkits bundle templates into end-to-end workflows. Launch a Campaign takes you from brief to measurement in about 90 minutes. The Executive Comms Pack prepares a spokesperson for external communications. Risk & Governance Starter establishes the governance foundations most communications teams lack. Each toolkit includes a time breakdown and a statement of what you'll have at the end – a direct response to one of Sarah Clarkycat's most consistent pieces of feedback.

The site is built on Astro, hosted on Vercel, and deliberately lightweight. Pages load fast. Templates are readable on mobile. The copy is in UK English throughout.

It's free to use. There is no paywall, no email gate on templates, no account required.

Each month I plan to add features and functionality, so stay tuned! There is already a substantial roadmap for 2026, but it remains open to change. The challenge has been not to go overboard: to keep the launch version lean and flexible, then to move in whichever direction analytics and audience feedback suggest.

The workflow that made this possible

Looking back across the full build, the pattern that emerges is not one tool or one technique – it's a loop.

- Research and strategy in ChatGPT. Competitive analysis, keyword mapping, architectural decisions. The outputs informed what to build before any building began.

- Observation converted to briefs via Claude Chat. Voice notes became instructions. ICP feedback became sprint tasks. Social media threads became improvement lists. Claude Chat acted as the strategic translation layer between raw observation and actionable brief.

- Implementation in Claude Cowork. Architecture, components, schemas, technical SEO. Methodical, iterative, traceable, with everything contained within my own laptop's hard drive (and back-ups maintained just in case).

- Briefs executed in Claude Code. Systematic implementation against a clear specification, rather than ad hoc changes driven by individual insights.

- Technical quality checked via Claude plugins. Superpowers and Code Review added rigour that conversational development wouldn't naturally prioritise.

Each tool handled what it was suited to. The integration between them – the human judgement that decided when to switch and what to bring from one tool to the next – was where most of the real work happened.

What this means for communications professionals

The most practical takeaway from this build is not the specific toolchain – it will be different in six months. It's the working method.

Separating research from implementation, and using different tools for different types of thinking, produces better results than trying to make one tool do everything. Building an explicit quality layer into the process – through ICP testing, structured UX review, and technical audit – catches the things that conversational development naturally misses. And treating the AI as a collaborator rather than an oracle means the professional judgement stays in the loop at every stage.

The 10-12 hours that produced the first functional version were useful. The two months that followed – a few hours a week, systematic and structured – are what made it worth using.

If you haven't already visited, CommsWith.AI is live. Take a look, use whatever is useful, and let me know what's missing. The content roadmap grows from requests, and there are currently more templates and features in development. As with the AI tools used for this build, the potential is already enormous, and will only grow.

- Applied Comms AI documents what actually works in AI implementation for communications professionals – including what doesn't. Subscribe at appliedcomms.ai.

Next up: AI Agents for Comms Leaders – masterclass webinar series

A six-part Applied Comms AI masterclass series on building agent-based workflows for communications teams – from planning and production through to governance, monitoring, and organisational change.

View sessions and register, with a limited-time 50% intro session discount: https://www.appliedcomms.ai/events/

Work With Faur / Applied Comms AI

Applied Comms AI helps communications teams move from AI experimentation to operational value. Through Faur, we offer workflow audits, implementation consulting, and capability-building workshops – grounded in the same hands-on approach you see in this content. If you're exploring how AI could transform your communications practice, drop us a line at info@faur.site or book a consultation session.